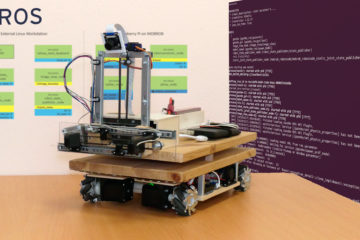

In this article, I present the central concept behind the control software of my mobile robot. There are many ways to structure the software of a robot, and I will show you which one I implemented in MOBROB.

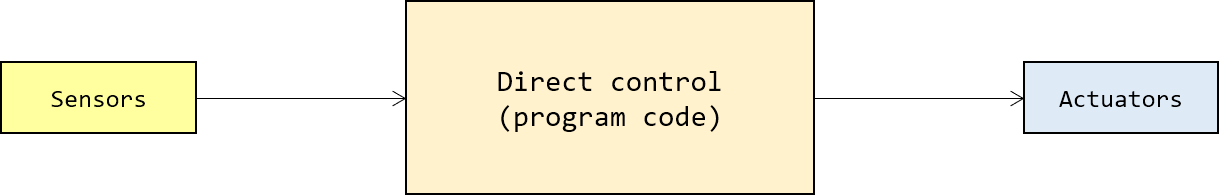

Direct control

The simplest option is so-called direct control: a control program regularly reads the sensor data and then commands the actuators directly. The drawback is that this approach quickly becomes unmanageably complex as soon as the robot has to distinguish many states and handle competing procedures.
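To make this drawback concrete, here is a minimal, hypothetical sketch of one direct control cycle. The inputs `front_distance`, `angle_error` and `task_done` and the returned velocity pair are assumptions for illustration, not MOBROB code. Each new situation adds another branch, and the branches soon start to depend on each other.

```python
def direct_control_step(front_distance, angle_error, task_done):
    """One cycle of a hypothetical direct controller.

    front_distance: distance to the nearest obstacle ahead in metres (assumed input)
    angle_error: misalignment to a wall in radians (assumed input)
    task_done: flag set by some other part of the program (assumed input)
    Returns a (translational velocity, rotational velocity) tuple.
    """
    if task_done:
        return (0.0, 0.0)
    if front_distance < 0.2:
        # too close: stop driving, but still correct the alignment
        if abs(angle_error) > 0.02:
            return (0.0, 0.2 if angle_error > 0 else -0.2)
        return (0.0, 0.0)
    if front_distance < 0.5:
        # slow zone: drive slowly and correct the alignment at the same time
        if angle_error > 0.02:
            rot = 0.2
        elif angle_error < -0.02:
            rot = -0.2
        else:
            rot = 0.0
        return (0.1, rot)
    # free space: full speed, alignment ignored (yet another special case)
    return (0.2, 0.0)
```

Adding a second sensor or a new goal would mean touching every one of these branches, which is exactly the complexity that behaviour-based control avoids.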

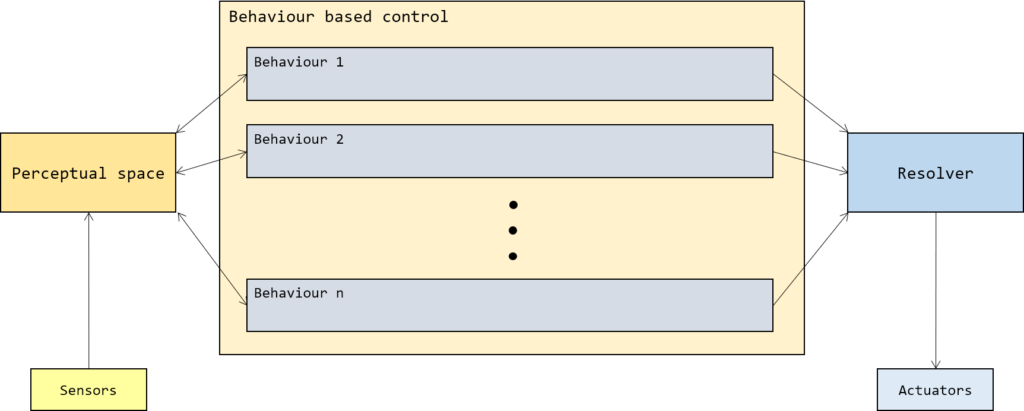

Behaviour-based control

An alternative is behaviour-based control, which Kurt Konolige and Karen Myers described as early as 1997 as part of the Saphira architecture. The idea is to have elementary behavioural patterns that form the building blocks of intelligence. For example:

- Food intake

- Obstacle avoidance

- Exploratory behaviour

The behavioural patterns (behaviours) are independent of each other and can therefore be combined in any number and order, depending on the situation. Each behaviour calculates desired outputs (desires) for the actuators. The so-called resolver then combines these desires into a single command for the actuators.

Depending on the application of the mobile robot, the progress of the task, and the robot's environment, a strategic level activates and deactivates the behaviour patterns. For the complexity of today's applications, a finite state machine is sufficient to represent this strategic level.

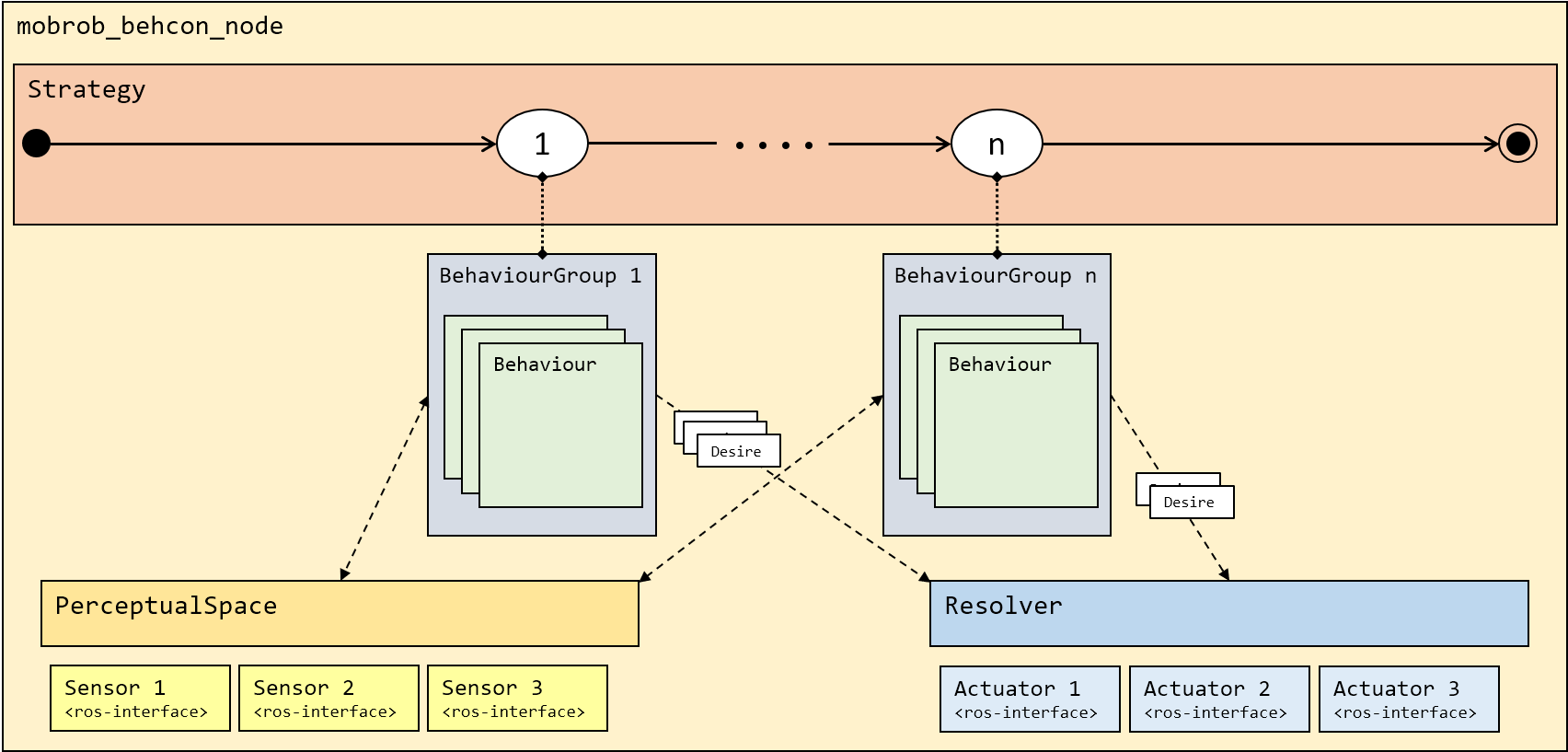

Behaviour-based control in MOBROB

In MOBROB, the behaviour-based control is built on six superclasses: Behaviour, BehaviourGroup, Desire, Resolver, PerceptualSpace and Strategy. I introduce each of them in detail below.

Behaviour

Superclass for all behaviours. Its method fire() is called cyclically every 100 ms by the resolver. In this method, the behaviour performs its specific task and generates any number of desires, which are sent to the resolver as action proposals for the actuators.
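The contract of fire() can be sketched as follows. This is a simplified, hypothetical version: the real mobrob_behcon classes differ, and the names `add_desire`, `active`, `BehConstTransVelSketch` and `CollectingResolver` are assumptions. A behaviour computes its desires and hands them to the resolver, while the resolver decides when fire() is called.

```python
from abc import ABC, abstractmethod


class Behaviour(ABC):
    """Hypothetical minimal superclass: subclasses implement fire()."""

    def __init__(self, name):
        self.name = name
        self.active = False    # switched on/off by the strategy
        self.resolver = None   # set when the behaviour is registered

    def add_desire(self, desire):
        # forward an action proposal to the resolver
        self.resolver.add_desire(desire)

    @abstractmethod
    def fire(self):
        """Called cyclically (every 100 ms in MOBROB) while the behaviour is active."""


class BehConstTransVelSketch(Behaviour):
    """Always proposes the same translational velocity with full strength."""

    def __init__(self, name, trans_vel):
        super().__init__(name)
        self.trans_vel = trans_vel

    def fire(self):
        # desire as a (value, strength) tuple; the package uses a Desire class
        self.add_desire((self.trans_vel, 1.0))


class CollectingResolver:
    """Stand-in resolver: fires active behaviours and collects their desires."""

    def __init__(self, behaviours):
        self.behaviours = behaviours
        self.desires = []
        for beh in behaviours:
            beh.resolver = self

    def add_desire(self, desire):
        self.desires.append(desire)

    def cycle(self):
        # one 100 ms cycle: start with an empty list, then fire every active behaviour
        self.desires = []
        for beh in self.behaviours:
            if beh.active:
                beh.fire()
        return self.desires
```

Keeping the cycle in the resolver means a behaviour never needs to know about timing or about the other behaviours.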

BehaviourGroup

A BehaviourGroup bundles several behaviours so that the strategy can activate or deactivate all of them at once.
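A BehaviourGroup can be sketched as a list of behaviours that each receive a static priority when they are added. The `add(behaviour, priority)` call mirrors the usage in the main file later in this article (`dock.add(lf, 80)`); the `activate()`/`deactivate()` methods, the `SimpleBehaviour` stub and the assumption that each behaviour belongs to exactly one group are hypothetical.

```python
class SimpleBehaviour:
    """Hypothetical stub standing in for a real Behaviour subclass."""

    def __init__(self, name):
        self.name = name
        self.active = False
        self.priority = None  # static priority, assigned by the group


class BehaviourGroup:
    """Bundles behaviours so the strategy can switch them together."""

    def __init__(self, name):
        self.name = name
        self.behaviours = []

    def add(self, behaviour, priority):
        # the static priority (0 to 100) is fixed once, when the behaviour is added
        if not 0 <= priority <= 100:
            raise ValueError("priority must be between 0 and 100")
        behaviour.priority = priority
        self.behaviours.append(behaviour)

    def activate(self):
        for beh in self.behaviours:
            beh.active = True

    def deactivate(self):
        for beh in self.behaviours:
            beh.active = False
```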

Strategy

Behaviours provide low-level control of the robot's physical actions. Above this level, behaviours have to be associated with the specific goals and objectives that the robot is expected to meet. This management involves deciding when to activate or deactivate behaviours during the execution of a task, as well as coordinating with other activities in the system.

In the MOBROB software, the Strategy class fills this role. It contains a finite state machine implemented in the method plan(), which is called cyclically every 100 ms. This is where the various behaviours are activated and deactivated.
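A plan() method for the RightAngleDock task could look roughly like the following sketch. The state names, the `docked`/`turned` flags passed as arguments and the `Group` stub are assumptions for illustration; the actual RDStrategy in mobrob_behcon may be structured differently and would read such conditions from the perceptual space. On each call, plan() checks the transition condition of the current state and switches behaviour groups only when the state changes.

```python
class Group:
    """Hypothetical stand-in for a BehaviourGroup with an on/off switch."""

    def __init__(self, name):
        self.name = name
        self.active = False

    def activate(self):
        self.active = True

    def deactivate(self):
        self.active = False


class RightAngleDockStrategy:
    """Finite state machine: INIT -> DOCK -> TURN -> DONE."""

    def __init__(self, dock, turn90):
        self.dock = dock
        self.turn90 = turn90
        self.state = "INIT"

    def plan(self, docked, turned):
        """Called every 100 ms; docked/turned are assumed perception flags."""
        if self.state == "INIT":
            self.dock.activate()
            self.state = "DOCK"
        elif self.state == "DOCK" and docked:
            self.dock.deactivate()
            self.turn90.activate()
            self.state = "TURN"
        elif self.state == "TURN" and turned:
            self.turn90.deactivate()
            self.state = "DONE"
        return self.state
```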

Desire

Desires are action proposals for the actuators that the behaviours send to the resolver.

A desire consists of

- a value (any number or array),

- a dynamic strength (0.0 – 1.0),

- a static priority (0 – 100), and

- an optional function that is executed if the desire is accepted.

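The priority is not chosen by the behaviour itself: it is set when the desire is forwarded to the resolver, based on the static priority the behaviour was given in its group.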
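Written as a Python dataclass, a desire could look like this. It is a sketch, not the class from mobrob_behcon: the field names, the range checks and the `accept()` helper are assumptions. The priority defaults to 0 here because it is only filled in when the desire is forwarded to the resolver.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Desire:
    value: Any                                      # any number or array, e.g. a velocity
    strength: float                                 # dynamic strength, 0.0 to 1.0
    priority: int = 0                               # static priority, 0 to 100, set on forwarding
    on_accept: Optional[Callable[[], None]] = None  # optional function run on acceptance

    def __post_init__(self):
        # validate the ranges given at construction time
        if not 0.0 <= self.strength <= 1.0:
            raise ValueError("strength must be within 0.0 and 1.0")
        if not 0 <= self.priority <= 100:
            raise ValueError("priority must be within 0 and 100")

    def accept(self):
        # run the optional function once the resolver accepts this desire
        if self.on_accept is not None:
            self.on_accept()
```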

PerceptualSpace

The perceptual space is the only interface between the behaviours and the robot's perceptual information, and every behaviour has access to it. The information can come directly from the sensors or from preprocessing units.
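The idea of a single shared store can be sketched like this. In mobrob_behcon the perceptual space is filled by ROS subscribers; in this hypothetical sketch a plain method `update_laser` stands in for the subscriber callback, and `front_distance` is an assumed example of preprocessed information. Behaviours only read from this object and never access the sensors directly.

```python
import math
import threading


class PerceptualSpace:
    """Hypothetical shared store of the robot's perceptual information."""

    def __init__(self):
        self._lock = threading.Lock()  # callbacks and behaviours may run in different threads
        self._angles = []              # beam angles in radians
        self._ranges = []              # matching laser ranges in metres

    def update_laser(self, angles, ranges):
        """Would be called by a sensor callback or a preprocessing unit."""
        with self._lock:
            self._angles = list(angles)
            self._ranges = list(ranges)

    def front_distance(self, half_width=math.radians(10)):
        """Smallest range within +/- half_width around the robot's heading."""
        with self._lock:
            front = [r for a, r in zip(self._angles, self._ranges)
                     if abs(a) <= half_width]
        return min(front) if front else math.inf
```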

Resolver

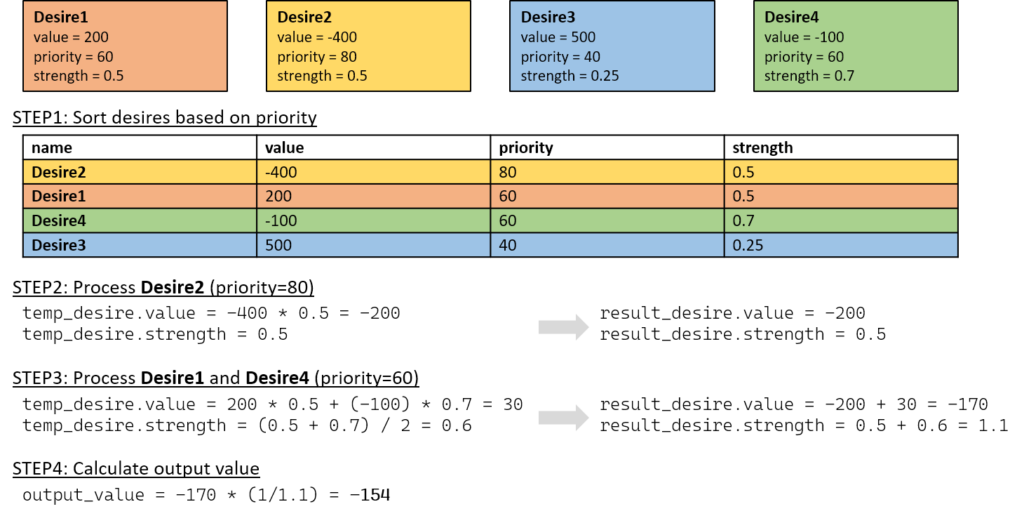

The resolver combines the desires according to their static and dynamic priorities.

- The static priority (priority) is set once, when a behaviour is added to its group.

- The dynamic priority (strength) is recalculated every time the fire() method is called.

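The algorithm of the resolver is shown in the following code snippet. It is a simple defuzzification function (a weighted average) that combines the different desires into one output value. Desires are processed in groups of equal priority, starting with the highest priority, until the accumulated strength reaches 1.0; any remaining lower-priority desires are ignored.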

```python
# sort list of desires based on priority (high to low)
lst_desires.sort(key=lambda x: x.priority, reverse=True)

# create resulting desire (used to accumulate value and strength over all groups)
result_desire = Desire(0, 0)

# declare variable to count desires with the same priority
num_desire_prio = 0

# declare index variable for outer while loop
i = 0

# loop through list of desires while result_desire.strength is smaller than 1.0
while result_desire.strength < 1.0 and i < len(lst_desires):
    # reset counter variable
    num_desire_prio = 0
    # create temporary desire to calculate weighted mean of desires with same priority
    temp_desire = Desire(0, 0)
    # loop through list of desires and find desires with same priority
    for k in range(len(lst_desires)):
        if lst_desires[k].priority == lst_desires[i].priority:
            # add weighted value and strength of selected desire to temporary desire
            temp_desire.value = temp_desire.value + lst_desires[k].value * lst_desires[k].strength
            temp_desire.strength = temp_desire.strength + lst_desires[k].strength
            num_desire_prio = num_desire_prio + 1
    # average both the weighted values and the strengths of the group, so the
    # group's mean value enters the result weighted by its mean strength
    temp_desire.value = temp_desire.value / num_desire_prio
    temp_desire.strength = temp_desire.strength / num_desire_prio
    # add strengths and value to result_desire
    result_desire.value = result_desire.value + temp_desire.value
    result_desire.strength = result_desire.strength + temp_desire.strength
    # jump over all desires with the same priority in list
    i = i + num_desire_prio

# calculate actual output value (no output if no desire has any strength)
if result_desire.strength > 0:
    output_value = result_desire.value / result_desire.strength
else:
    output_value = None
```
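The resolver snippet depends on the Desire class of the package. The following self-contained sketch wraps the procedure in a function so it can be tried outside ROS. It is a variant in which both value and strength are averaged within each priority group, so the result is a true weighted average; the simplified `Desire` dataclass and the name `resolve()` are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Desire:
    value: float
    strength: float
    priority: int = 0


def resolve(desires):
    """Combine desires into one output value; returns None if nothing has strength."""
    # highest priority first; equal priorities end up next to each other
    ordered = sorted(desires, key=lambda d: d.priority, reverse=True)
    total_value = 0.0
    total_strength = 0.0
    i = 0
    # stop once the higher-priority groups have accumulated full strength
    while total_strength < 1.0 and i < len(ordered):
        prio = ordered[i].priority
        group = [d for d in ordered if d.priority == prio]
        n = len(group)
        # group mean of value * strength and group mean of strength
        total_value += sum(d.value * d.strength for d in group) / n
        total_strength += sum(d.strength for d in group) / n
        # skip the rest of this priority group
        i += n
    if total_strength == 0.0:
        return None
    return total_value / total_strength
```

For example, a stop desire with value 0.0 and full strength at priority 80 completely overrides a velocity desire of 0.2 at priority 50. If the stop desire only has strength 0.5, the two are blended to 0.2 / 1.5 ≈ 0.13.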

Here is an example with four desires, which will be processed by the resolver:

ROS-Package: mobrob_behcon

I implemented this behaviour-based control architecture as the Python ROS package mobrob_behcon, which integrates it into the MOBROB architecture described in another post. Thanks to the abstract superclasses and the modular structure of this architecture, the configuration for a given task can be described easily in the main file. Behaviours and desires can be reused, and different tasks can be handled by creating different strategies, without rewriting the whole program. Subscribers to ROS topics live in submodules of PerceptualSpace, so any behaviour in the system can request their information. Publishers, on the other hand, are placed only in the Resolver or its submodules, so the outputs of different behaviours cannot conflict.

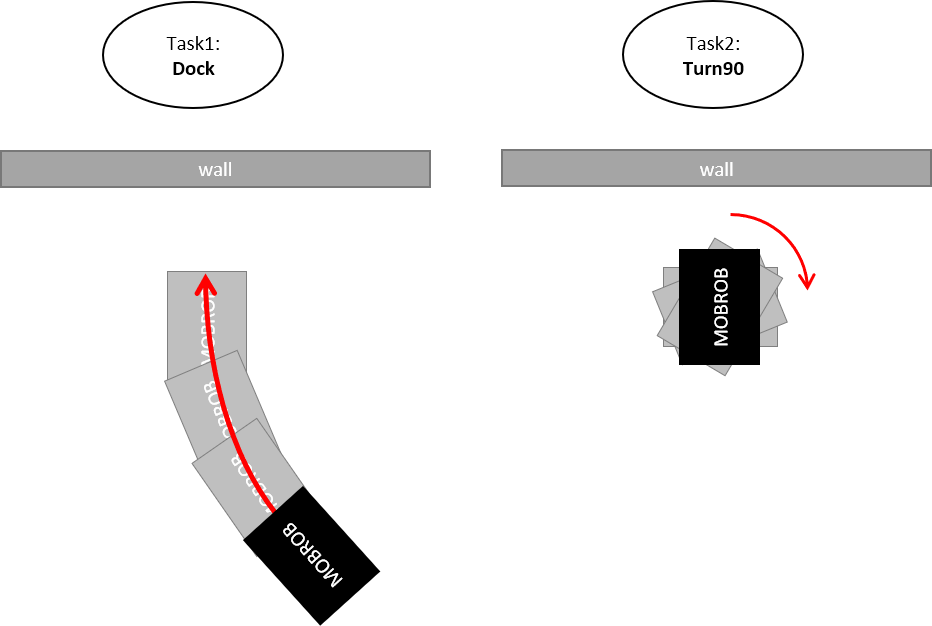

The following main file for the application RightAngleDock shows what such a configuration can look like. The robot first aligns itself at a right angle to a wall and then performs a 90-degree turn to the right:

```python
#!/usr/bin/env python
import rospy

from mobrob_behcon.behaviours.behaviourgroup import BehaviourGroup
from mobrob_behcon.behaviours.behaviour import Behaviour
from mobrob_behcon.behaviours.beh_align import BehAlign
from mobrob_behcon.behaviours.beh_transvel import BehConstTransVel
from mobrob_behcon.behaviours.beh_limfor import BehLimFor
from mobrob_behcon.behaviours.beh_stop import BehStop
from mobrob_behcon.behaviours.beh_turn import BehTurn
from mobrob_behcon.strategies.rd_strategy import RDStrategy
from mobrob_behcon.core.behcon_node import BehConNode

if __name__ == '__main__':
    # create robot
    robot = BehConNode("mobrob_node")

    # create behaviours
    cv = BehConstTransVel("ConstTransVel", trans_vel=0.2)
    tu = BehTurn("Turn", -90)
    lf = BehLimFor("LimFor", stopdistance=0.2, slowdistance=0.5, slowspeed=0.1)
    al = BehAlign("Align", tolerance=0.020, rot_vel=0.2)
    st = BehStop("Stop", stopdistance=0.3)

    # create behaviourgroups (the second argument is the static priority)
    dock = BehaviourGroup("Dock")
    dock.add(lf, 80)
    dock.add(cv, 50)
    dock.add(al, 75)
    dock.add(st, 80)
    robot.add_beh_group(dock)

    turn90 = BehaviourGroup("Turn90")
    turn90.add(tu, 80)
    robot.add_beh_group(turn90)

    # create strategy
    robot.add_strategy(RDStrategy(dock, turn90))

    # start program
    robot.start()
```

If you are interested, check out my repository on GitHub for more information about the source code. It is a living repository that will grow as I develop more tasks for the robot.

https://github.com/techniccontroller/MobRob_ROS_behcon

I also created complete Sphinx documentation of the source code, which covers every subclass of the superclasses presented above.

https://techniccontroller.github.io/MobRob_ROS_behcon/

Additional components for visualisation

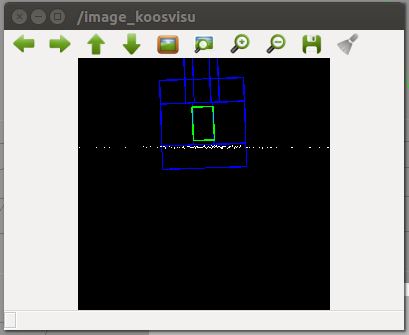

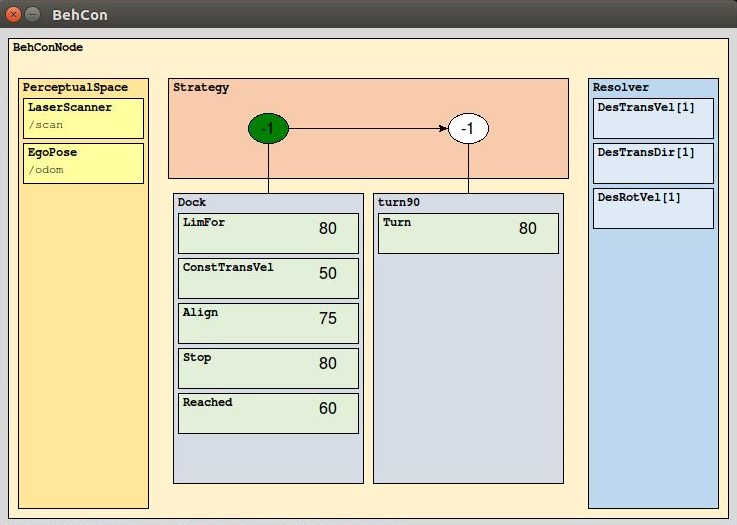

For debugging and for developing new behaviours, it helps to visualise what is going on in the software. I therefore created two visualisation classes: KOOSVisu and VisuBehCon.

KOOSVisu

This component visualises the robot's environment from a bird's-eye view. Classes such as Laserscanner or Behaviour can draw on its canvas. The class implements a ROS publisher that publishes the canvas as a video stream.

VisuBehCon

This component visualises the current software configuration of the robot's behaviour-based control, including the strategy and all behaviours with their priorities. At runtime, it also shows the current state of the strategy and the outputs sent to the resolver.

References

Konolige, K., Myers, K., Ruspini, E., & Saffiotti, A. (1997). The Saphira Architecture: A Design for Autonomy. Journal of Experimental & Theoretical Artificial Intelligence, 9. doi:10.1080/095281397147095

Konolige, K., & Myers, K. (1998). The Saphira architecture for autonomous mobile robots. Artificial Intelligence and Mobile Robots: Case Studies of Successful Robot Systems, 9, 211–242.
